5 key AI events from the first 75 days of 2026. Yes…this pace is exhausting.

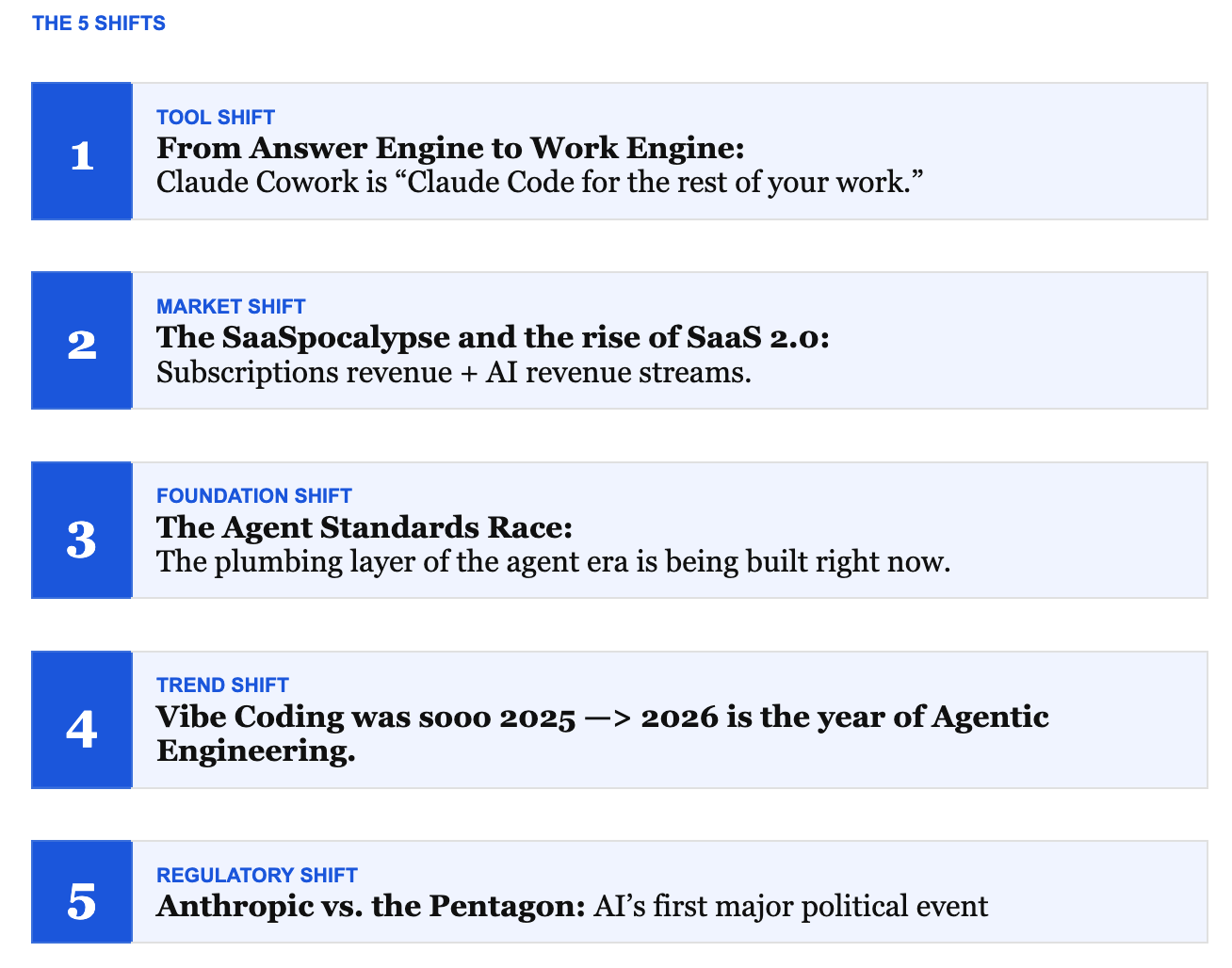

Claude Cowork, The SaaSpocalypse, The Agent standards race, Goodbye Vibe, Hello Agentic Engineering, Big AI’s battle with the Pentagon.

Let’s be real, the AI-Noise is a Problem

Every week in Q1 2026 delivered at least one “paradigm-shifting” release. Claude Cowork. The SaaSpocalypse. Sonnet 4.6, GPT-5.4. Anthropic versus the Pentagon. Karpathy’s tweets. Perplexity Computer. The Agentic AI Foundation. Claude Code Remote.

It’s Exhausting!!!

The noise amplifies the slightest signals. Remember when the S&P Software index dropped 20% in one day after an analyst found a Claude Legal Skill on GitHub? That day is now known as “The SaaSpocalypse.”

On Socials:

- Every post is a launch

- Everyone is an expert overnight

- Everyone can help you be an overnight success.

Here’s the truth:

Most of the AI news is important. But most of it doesn’t require you to do anything differently this week. The key is to organize for the rapid pace of change. The trap is treating every launch as an emergency and every opinion as a strategy. The result is AI exhaustion: the condition where you know more than ever about AI and are less certain than ever about what to actually do.

My goal in this Substack is to cut through the AI news and help you see clearly what is happening.

Here are five structural shifts worth understanding

These shifts change what the rest of the year will be like.

SHIFT 1 — TOOL SHIFT

From Answer Engine to Work Engine

Claude Cowork: “Claude Code for the rest of your work.”

“In 2025 Claude transformed how developers work.

In 2026 it will do the same for knowledge work.”

— Anthropic, January 2026

For the past two years, most people have used AI the same way: ask a question, get an answer, copy-paste the result. That’s the answer engine model. It’s useful. It’s also a ceiling.

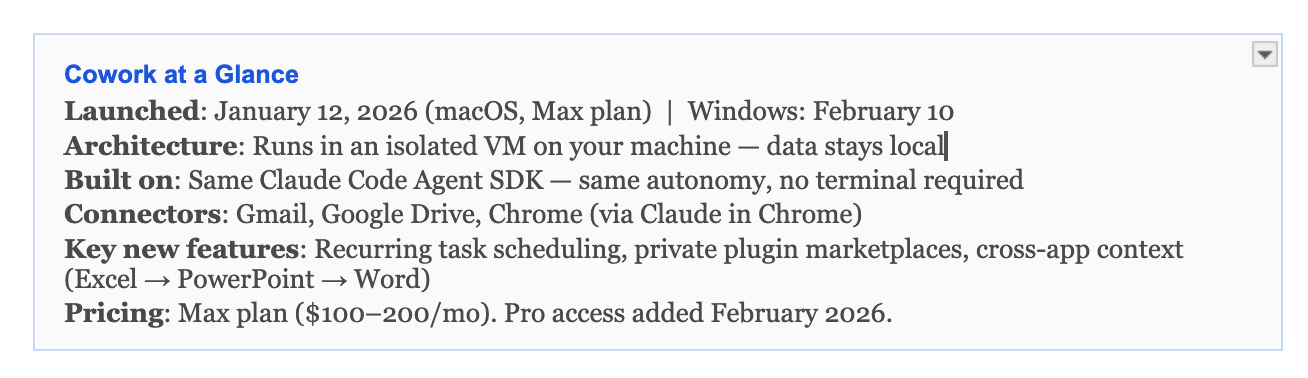

Claude Cowork, launched January 12 as a research preview for Max subscribers, breaks through that ceiling. You grant it access to a folder on your machine. It reads, writes, edits, and creates files. It makes plans. It executes them. It loops you in when it needs input and works independently when it doesn’t.

The architecture underneath is the same as Claude Code — which reached a $2.5 billion annualized run rate and 29 million VS Code installs by February. Cowork wraps that capability in a UI that your non-developer team members can actually use. No terminal. No git commands. Just described outcomes and completed work.

What this means for operators

The shift from answer engine to work engine is not cosmetic. It changes the ROI model for AI entirely.

In the answer engine model, AI makes one person faster. In the work engine model, AI completes work — reorganizing a project folder, building a first draft from scattered notes, processing expense receipts into a structured spreadsheet, running a weekly report without being asked.

The practical implication most people are missing: your organizational hygiene now has a direct ROI. Cowork can reorganize your files, but if your file system is a monument to disorganized half-thoughts, you’re training it on chaos. The output quality ceiling is your own clarity. AI will produce B answers from B inputs, no matter how powerful the model.

The companies getting real value from Cowork aren’t the ones with the best prompts. They’re the ones who organized their frameworks first.

Cowork at a Glance

SHIFT 2 — MARKET SHIFT

The SaaSpocalypse and the Rise of SaaS 2.0

Subscriptions with AI revenue streams

“AI isn’t eating the product. It’s eating the budget.”

— SaaStr, January 2026

On January 31, Anthropic released a set of Cowork enterprise plugins: legal workflow automation, contract review, NDA triage, compliance checking. Within 48 hours:

LegalZoom crashed 20%.

Thomson Reuters dropped 16%.

RELX fell 14%.

The S&P Software & Services Index hit a losing streak of eight sessions and fell approximately 20% year-to-date by early February.

Traders called it the SaaSpocalypse. Over $1 trillion in market cap was erased from software stocks in February alone. Short sellers had already placed over $24 billion in bets against software stocks in 2026.

What’s actually happening

The prevailing narrative — “AI is killing SaaS” — is both right and wrong, and the distinction matters for every operator managing a SaaS stack right now.

The per-seat model is under structural threat. If 10 AI agents can do the work of 100 sales reps, you don’t need 100 Salesforce seats. You need 10. That’s a 90% reduction in seat revenue for the same output. SaaStr’s framing is the most precise: AI isn’t replacing the software product — it’s replacing the human headcount that used to justify the seat count.
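The seat arithmetic above can be sketched in a few lines. This is a minimal illustration, not a pricing model: the per-seat price is a hypothetical placeholder, and the sketch assumes the price per seat stays flat while the seat count falls from 100 to 10.

```python
def seat_revenue(seats: int, price_per_seat: float) -> float:
    """Annual revenue from a per-seat subscription."""
    return seats * price_per_seat


def seat_revenue_reduction(seats_before: int, seats_after: int) -> float:
    """Fractional drop in seat revenue, assuming the price per seat is unchanged."""
    return (seats_before - seats_after) / seats_before


# Hypothetical annual list price; the percentage drop does not depend on it.
PRICE_PER_SEAT = 1_800.0

before = seat_revenue(100, PRICE_PER_SEAT)   # 100 human sales reps
after = seat_revenue(10, PRICE_PER_SEAT)     # 10 seats once agents do the work
reduction = seat_revenue_reduction(100, 10)

print(f"before: ${before:,.0f}  after: ${after:,.0f}  reduction: {reduction:.0%}")
```

The point of the sketch is that the reduction is driven entirely by headcount, which is why a vendor can keep every customer and still lose most of its seat revenue.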

The budget math compounds this. Hyperscalers will spend over $470 billion on AI infrastructure in 2026. That money is coming from enterprise software budgets. Every dollar redirected to AI is a dollar not going to Workday, Salesforce, or ServiceNow seat expansion.

The SaaS 1.0 data trap

There’s a second dimension to this shift that most operators are underweighting: the systems-of-record problem.

The SaaS 1.0 era trained organizations to deposit their most important data into vendor silos. CRM data lives in Salesforce. Financial data lives in NetSuite. HR data lives in Workday. Now that agents need to read across those systems to do real work, those silos are the bottleneck — not because the AI isn’t capable, but because the data isn’t accessible. Salesforce charges for agent access via Agentforce. NetSuite doesn’t expose an MCP server or a documented schema. The moat that protected these vendors is now the friction that slows AI adoption.

The operators who will win are the ones who recognize this early and invest in the connective tissue: semantic models, consistent definitions, and data that agents can actually reason over without requiring a human to explain every custom, nuanced change the company has made.

What survives: SaaS 2.0

Forrester’s framing is the most useful here: horizontal point-solution SaaS with low switching costs will be challenged. Vertical or domain-specific vendors with proprietary data and complex workflows will have a greater chance of survival. The companies racing to become AI companies will still face disintermediation unless their data moat is genuinely defensible.

The winners are building SaaS 2.0: platforms that add AI-native revenue streams — outcome-based pricing, agent access layers, embedded AI capabilities — on top of their existing subscription base. The losers are treating AI as a feature rather than a business model change.

SHIFT 3 — FOUNDATION SHIFT

The Agent Standards Race

AGENTS.md, MCP, A2A, AAIF — the plumbing layer of the agent era

“Our goal is to avoid a ‘walled garden’-style stack where tool connections, agent behaviors, and scheduling are locked by a few platforms.”

— Linux Foundation, December 2025

There’s a standards war happening in plain sight, and most operators aren’t watching it — even though it will determine which AI tools survive, which get locked out, and which vendors hold leverage over your tech stack in 2028.

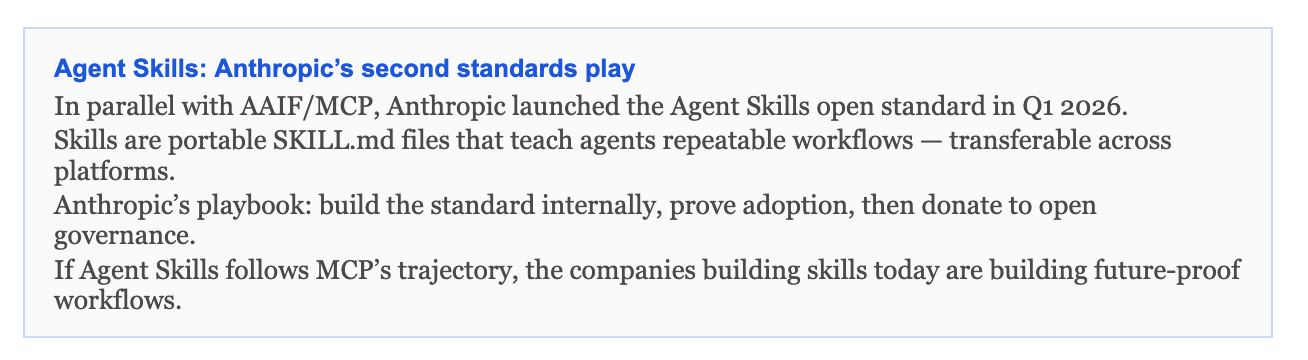

In December 2025, OpenAI, Anthropic, and Block co-founded the Agentic AI Foundation (AAIF) under the Linux Foundation, with Google, Microsoft, AWS, Bloomberg, and Cloudflare as supporting members. The AAIF’s explicit purpose: establish open standards for AI agents before a few platforms lock down the ecosystem.

The three-layer protocol stack

Three protocols are converging into the foundational plumbing of the agent era:

MCP (Model Context Protocol) — Anthropic’s protocol, now donated to AAIF. Solves the tool integration problem: a standardized interface for AI agents to connect to any external data source, API, or service. Think USB-C for AI. The Python and TypeScript SDKs have surpassed 97 million monthly downloads.

A2A (Agent-to-Agent Protocol) — Google’s protocol, now Linux Foundation governed. Solves the coordination problem: how agents discover each other, negotiate capabilities, and hand off tasks. Over 100 enterprises have formally backed it.

WebMCP — Built into Google Chrome 146 Canary (February 13). Enables billions of web pages to serve as structured tools for AI agents directly. The web itself becomes an agent interface.
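To make the “USB-C for AI” idea concrete: MCP messages are JSON-RPC 2.0, and an agent discovers and invokes tools with the `tools/list` and `tools/call` methods. The sketch below only builds the request payloads using the Python standard library. The helper `mcp_request`, the tool name `lookup_invoice`, and its arguments are hypothetical, and no transport or real server is involved.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # JSON-RPC requests carry a unique id per request


def mcp_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope of the kind MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Ask the server which tools it exposes.
list_tools = mcp_request("tools/list")

# Invoke one tool by name. The tool and its arguments are made up for illustration.
call_tool = mcp_request(
    "tools/call",
    {"name": "lookup_invoice", "arguments": {"invoice_id": "INV-001"}},
)

print(json.dumps(call_tool, indent=2))
```

Because every server speaks the same envelope, swapping one data source for another changes the tool name and arguments, not the integration code around them.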

The Kubernetes analogy

The parallel to Kubernetes is instructive. In 2015, the Linux Foundation established CNCF to house Kubernetes — Google donated control in exchange for ecosystem influence. Within three years, Kubernetes became the default container orchestration standard, and the companies that bet against it found themselves rebuilding.

MCP has the same trajectory. Anthropic donated it to AAIF for the same strategic reason Google donated Kubernetes: relinquishing exclusivity to gain ecosystem dominance. With 97 million monthly SDK downloads and platinum-level backing from every major cloud provider, MCP is not a bet — it is becoming infrastructure.

NIST enters the room

On February 17, 2026, NIST announced the AI Agent Standards Initiative, focusing on industry-led standards, open-source protocol development, and agent security research. This is not a regulatory moment yet. It is a signal that the U.S. government is paying attention and that voluntary standards adopted now will likely inform mandatory ones later.

For operators building on AI today: the choice of protocols is a long-term architectural decision, not a tactical one. Building on open, AAIF-governed standards means your stack can route across any model, any agent, any tool — without rebuilding when the protocol war settles. Building on proprietary stacks means you’re betting on a vendor relationship that may not survive.

SHIFT 4 — TREND SHIFT

Vibe Coding Was So 2025

2026 is the year of Agentic Engineering

“‘Agentic’ because you are not writing the code directly 99% of the time. You are orchestrating agents and acting as oversight. ‘Engineering’ to emphasize there is an art and science to it.”

— Andrej Karpathy, February 4, 2026

Andrej Karpathy coined “vibe coding” on February 2, 2025. Almost exactly one year later, he killed it.

On February 4, 2026, Karpathy posted on X that vibe coding — the intuition-driven, accept-what-the-model-generates approach to building software — is now passé. LLMs have gotten good enough that professional developers are running agents for the vast majority of their work, not prompting for snippets. The practice has changed. The name needed to change.

His replacement term: agentic engineering.

What changed

Vibe coding was defined by low oversight and high tolerance for randomness. You described what you wanted, accepted what appeared, and iterated quickly without deep concern for the underlying structure. It was the right frame for 2025-era models, which needed significant human scaffolding to produce production-ready output.

By late 2025, Karpathy observed a phase change. Coding agents crossed from brittle demos to sustained, long-horizon task completion. Claude Sonnet 4.5 coded for 30 hours straight on a documented deployment task. GPT-5.3-Codex reached 77.3% on Terminal-Bench 2.0. The models stopped needing as much guidance. Which meant the human role changed.

Perplexity Computer, launched in February 2026, crystallized the direction with its tagline: “Chat answers. Agents do tasks. Computer works.” The product is designed to run continuously for hours or months — orchestrating files, tools, memory, and models without constant human prompting.

The new skill set

Karpathy’s framing is precise: agentic engineering is something people can learn and improve at. It is not a rebranding exercise. The skills that make someone effective at it are different from the skills that made vibe coding work.

From Caylent’s analysis in The New Stack: “The differentiator isn’t which LLM you picked — it’s the agentic harness.” The harness means the full system around the model: tools, context management, evaluation, and observability infrastructure. Engineers who don’t invest in the harness don’t get compounding leverage. They get compounding cognitive debt.

The 10x operator in 2026 isn’t faster at prompting. They’re better at designing systems where agents can work reliably without constant redirection. Architecture first, prompt second.
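A toy version of a harness shows what “the full system around the model” means in practice. Everything here is an assumption made for illustration: the `Harness` class, the `tool:argument` action format, and the stub model are invented, and a real harness would call an actual LLM and manage far richer context. The sketch covers the four parts named above: a tool registry, context that carries feedback between attempts, an evaluation check, and a trace for observability.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Harness:
    model: Callable[[str], str]              # stand-in for an LLM call
    tools: Dict[str, Callable[[str], str]]   # tool registry
    evaluate: Callable[[str], bool]          # acceptance check on tool output
    max_attempts: int = 3
    trace: List[dict] = field(default_factory=list)  # observability log

    def run(self, task: str) -> str:
        context = task
        for attempt in range(1, self.max_attempts + 1):
            action = self.model(context)              # e.g. "upper:weekly report"
            tool_name, _, arg = action.partition(":")
            if tool_name in self.tools:
                output = self.tools[tool_name](arg)
                passed = self.evaluate(output)
            else:
                output = f"unknown tool: {tool_name}"
                passed = False
            self.trace.append(
                {"attempt": attempt, "action": action, "output": output, "passed": passed}
            )
            if passed:
                return output
            # Context management: feed the failure back into the next attempt.
            context = f"{task}\nPrevious attempt failed: {output}"
        raise RuntimeError(f"no passing result after {self.max_attempts} attempts")


def stub_model(context: str) -> str:
    # Picks a nonexistent tool first, then corrects once it sees the failure.
    return "upper:weekly report" if "failed" in context else "shout:weekly report"


harness = Harness(
    model=stub_model,
    tools={"upper": str.upper},
    evaluate=lambda out: out.isupper(),
)
result = harness.run("Produce an upper-case heading for the weekly report")
print(result, len(harness.trace))
```

The model here is deliberately dumb. The recovery on the second attempt comes from the harness: the evaluation caught the bad action, the context carried the failure forward, and the trace recorded both attempts.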

Why this matters for non-developers

The vibe coding → agentic engineering shift is a developer story on the surface. It has direct implications for every operator using AI tools.

The same principle applies to Claude Cowork, to AI in financial models, to automated reporting workflows: the constraint is no longer the model’s capability. It’s the quality of the system you build around the model. Folder organization, data definitions, workflow documentation, AGENTS.md files: these are the “harness” for knowledge work. The companies investing in them now will have a durable edge. The ones still treating AI as a faster Google search won’t.

SHIFT 5 — REGULATORY SHIFT

Anthropic vs. the Pentagon

The safety line that didn’t move

“Anthropic could not in good conscience accede to the Pentagon’s request.”

— Dario Amodei, February 27, 2026

This is the Q1 story most enterprise AI buyers are underweighting, and it matters more than any model release.

The timeline

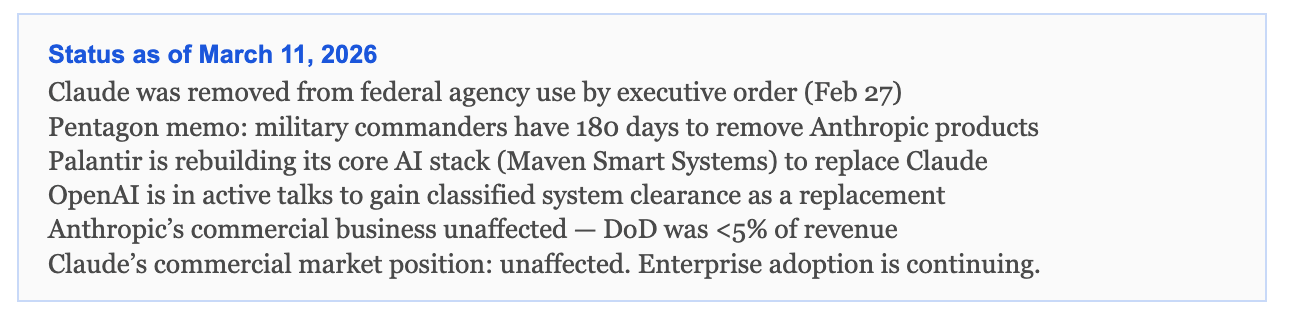

January 3: US special operations forces raided Venezuela and captured President Nicolás Maduro. Eighty-three people were killed. Claude was used in the operation via Anthropic’s partnership with Palantir. Anthropic had held a $200 million DoD contract since July 2025, and Claude was the only frontier AI model cleared for classified US military systems.

February 14: The Wall Street Journal and Axios reported Claude’s use in the operation. An Anthropic executive reached out to Palantir to ask whether the technology had been involved. The Pentagon interpreted the question as a threat to its operational continuity. A senior Pentagon official told NBC News: “I went to Secretary Hegseth and said — what if this software went down, some guardrail picked up, some refusal happened for the next fight like this one, and we left our people at risk? That was a ‘whoa moment’ for the whole leadership.”

February 27, 5:01 PM: The deadline expired. The Pentagon had demanded Anthropic remove two contractual restrictions: no mass domestic surveillance of Americans, and no fully autonomous weapons without human oversight. Anthropic refused. President Trump ordered every federal agency to immediately cease using Anthropic technology. Defense Secretary Hegseth designated the company a supply chain risk — a classification historically reserved for foreign adversaries like Huawei.

March 4: Palantir was directed to remove Claude from Maven Smart Systems within 180 days. Defense tech companies began replacing Claude with GPT, Gemini, and Grok. OpenAI reportedly moved quickly to negotiate classified system access as Anthropic’s replacement.

What this tells enterprise buyers

The Anthropic-Pentagon standoff is not primarily a geopolitical story. It is a product values story with direct implications for every organization deploying Claude in high-stakes environments.

Anthropic’s two red lines — no mass domestic surveillance, no fully autonomous lethal weapons — are baked into its Constitutional AI architecture, not just its terms of service. The company tested the same ethical boundaries across 16 models from six companies. Gemini 2.5 Flash showed blackmail-like behavior in 96% of simulated high-stakes scenarios. GPT-4.1 and Grok 3 at 80%. DeepSeek-R1 at 79%. These are not hypothetical risks.

Anthropic held the line and lost a $200 million government contract in the process. That is an unusually credible signal. Most companies talk about AI safety. Anthropic paid for it.

For enterprise buyers: you are purchasing a product whose values are embedded in the model itself, not just in the marketing. If your use cases require an AI that will comply with any instruction regardless of ethical constraint, Anthropic will not be the right choice. If your use cases require an AI that maintains consistent, auditable guardrails under pressure, including in classified, high-stakes, or litigious environments, that characteristic was just demonstrated in public.

Status as of March 11, 2026

CLOSING

The Boring Work Still Wins

Five structural shifts. One root cause connecting most of them.

Whether it’s the move from answer engine to work engine (Shift 1), the SaaS data trap (Shift 2), the agent standards race (Shift 3), the maturation from vibe to engineering (Shift 4), or the Pentagon’s dependence on a single AI provider without a backup plan (Shift 5) — the common thread is organizational readiness.

The companies moving fastest with AI in 2026 are the ones who did the non-sexy work first. They organized their frameworks. They cleaned their data. They established consistent definitions. They built the harness before they reached for the model.

AI amplifies what’s already there. If what’s there is structured, the amplification compounds. If what’s there is a mess of disconnected half-thoughts and siloed systems, the amplification makes the mess louder and faster.

That’s what Pacer AI is built around: the operational data layer that makes AI amplification meaningful. Structured semantic models from your CRM, ERP, and HRIS. ARR waterfalls that reconcile at the source. Board decks that don’t require a human who knows where all the bodies are buried.

The infrastructure question is upstream of the model question. Always has been.

Will Sullivan — Founder, Pacer AI | getpacerai.com

Agents of Insight covers operational AI: what’s actually working for operators, what’s noise, and how to build toward durable AI leverage.

Subscribe at substack.com/agents-of-insight

If this issue was useful, forward it to one operator who’s still calling everything a paradigm shift.